Z-GAN Image-to-Voxel Model Translation with Conditional Adversarial Networks

MIPT, Moscow, Russia

In ECCV 2018, 4th International Workshop on Recovering 6D Object Pose

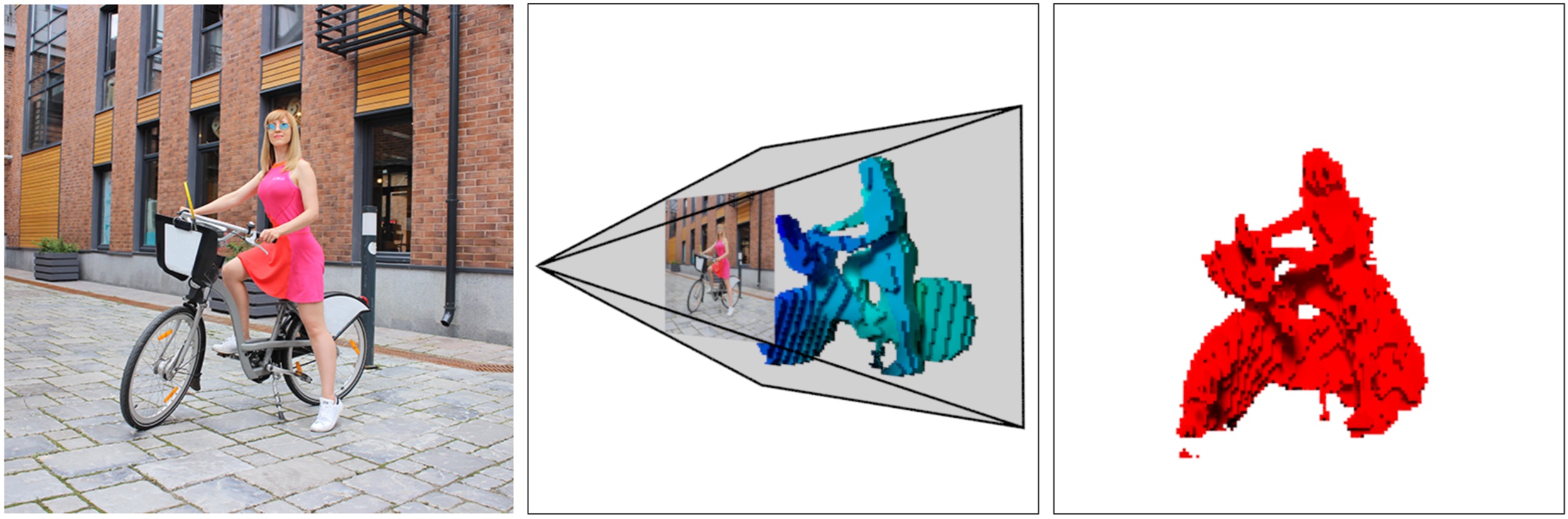

Examples of our image-to-voxel translation based on generative adversarial network and frustum voxel model. Input color image (left). Ground truth frustum voxel model slices colored as a depth map (middle). The voxel model output (right).

Abstract

We present a single-view voxel model prediction method that uses generative adversarial networks. Our method utilizes correspondences between 2D silhouettes and slices of a camera frustum to predict a voxel model of a scene with multiple object instances. We exploit pyramid shaped voxel and a generator network with skip connections between 2D and 3D feature maps. We collected two datasets VoxelCity and VoxelHome to train our framework with 36,416 images of 28 scenes with ground-truth 3D models, depth maps, and 6D object poses. We made the datasets publicly available . We evaluate our framework on 3D shape datasets to show that it delivers robust 3D scene reconstruction results that compete with and surpass state-of-the-art in a scene reconstruction with multiple non-rigid objects.

Voxelhome Dataset

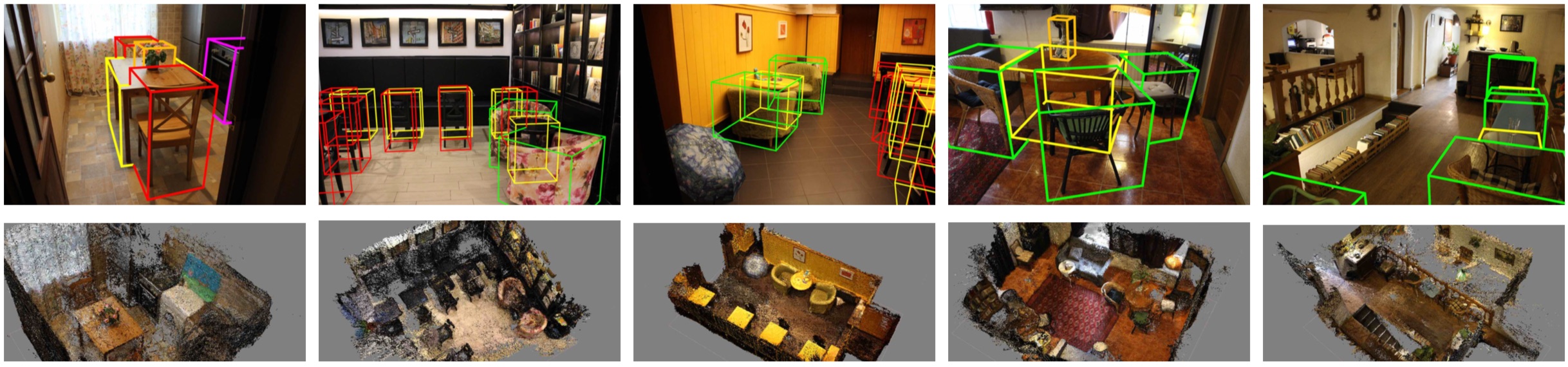

VoxelHome Dataset poster.

Our VoxelHome dataset presents 3D models of 7 indoor scenes, 17,580 color images with ground-truth 3D models, depth maps and 6D poses of nine object classes: chair, table, armchair, sofa, stool, cupboard, vase, washing machine, oven.

For download "VoxelHome Dataset" dataset write to vl.kniaz@gosniias.ru

Created date: 2022-10-13 10:17:39

Last update: 2022-10-13 10:17:39